A Look Inside a QA Team’s MagicPod Workflow

While many teams would benefit from implementing test automation, it comes with challenges. To avoid issues like high maintenance costs and unstable test executions, a QA team must leverage different strategies to refine their processes for efficiency.

At Kaipoke, the QA team has successfully optimized their MagicPod workflow with several strategies. Thanks to an article by Yusaku Nakamura, a leader within the Kaipoke QA team, we get an inside look at their testing workflow. His insights offer valuable takeaways that MagicPod users can apply to enhance their own testing processes. Let's dive in!

The original article is available here in Japanese.

Scope of Automation

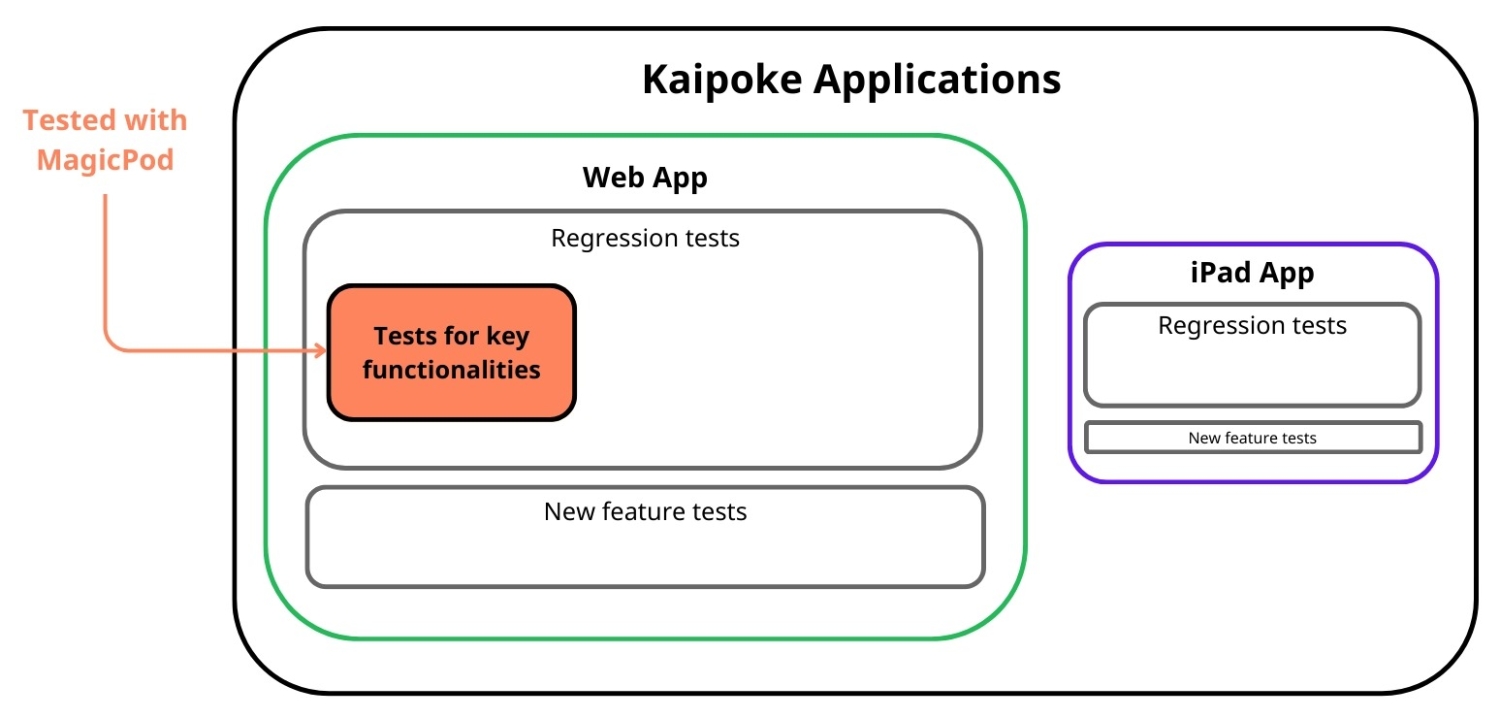

Kaipoke is a cloud-based management support platform that streamlines operations for nursing care providers through digital tools and automation. It operates as a web application and a native app (iPadOS), but the QA team focuses on E2E test automation for the web platform. To minimize maintenance costs, they primarily automate regression tests for features and user interfaces that do not frequently change. These regression tests verify that existing functionalities remain unaffected by new additions or modifications to the application.

Since covering every feature would result in an overwhelming number of test cases and huge maintenance efforts, the Kaipoke QA team prioritizes core functionalities and happy-path scenarios. Their goal is not to find bugs, but to confirm that the system continuously operates as expected.

Scope of testing of Kaipoke applications

Optimizing MagicPod Testing

In the past, the team saw issues with increasing implementation and maintenance costs, overreliance on specific individuals to keep the system running, and unstable tests. To optimize their test automation process, the team implemented several strategies.

Goal: Reduce Costs of Test Creation & Maintenance

The Kaipoke QA team used the following three MagicPod features to save time and manpower for test creation and maintenance.

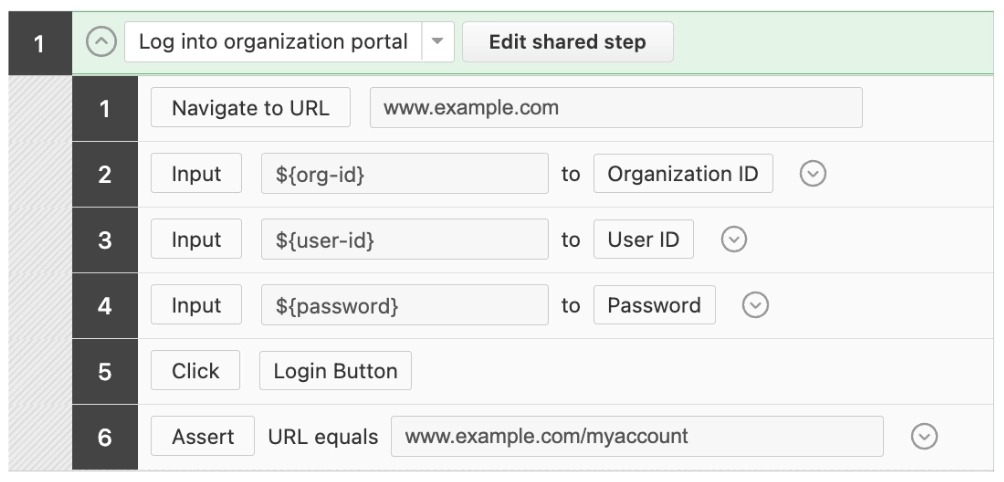

Shared Steps (Standardization)

The team saved steps that appear in multiple test cases as shared steps, so each one is defined once and reused everywhere.

See documentation on shared steps here.

Shared step

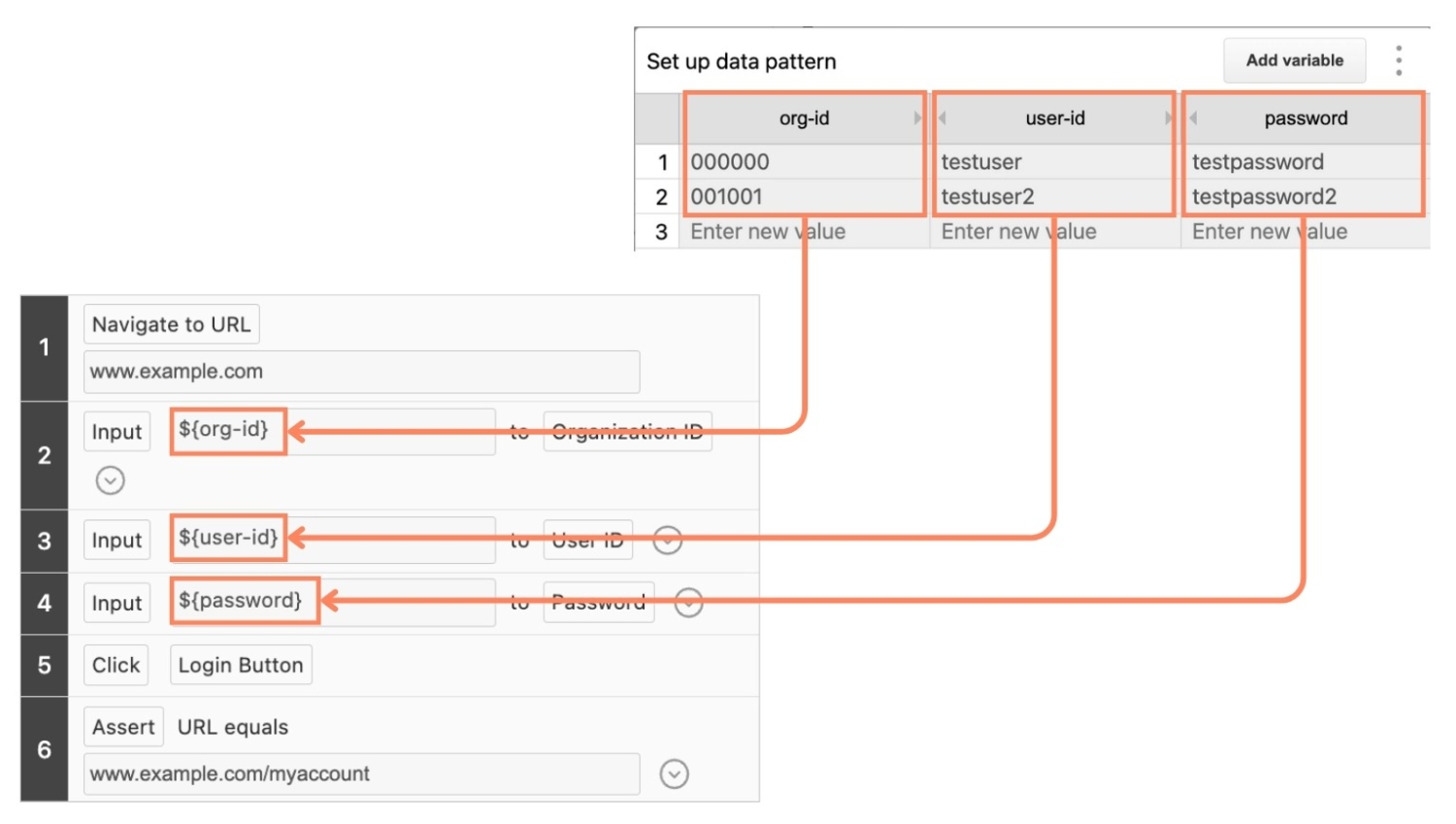

Variables

The team uses variables for data and locators that change depending on the test case or test environment.

Learn more about variables in MagicPod here.

Using variables
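MagicPod handles variables through its own editor, so the following is only a conceptual sketch in Python of the same idea: keeping environment-specific values out of the test steps. The environment names, URLs, user names, and the `resolve` helper are all hypothetical and are not taken from the Kaipoke setup.

```python
from string import Template

# Hypothetical per-environment values; none of these come from the Kaipoke setup.
ENVIRONMENTS = {
    "staging": {"base_url": "https://staging.example.com", "user": "qa_staging"},
    "production": {"base_url": "https://www.example.com", "user": "qa_prod"},
}

# Test steps refer to variables by name instead of hard-coding values.
STEPS = [
    "open ${base_url}/login",
    "type ${user} into the user name field",
]


def resolve(steps, env_name):
    """Substitute the variables of the chosen environment into each step."""
    variables = ENVIRONMENTS[env_name]
    return [Template(step).substitute(variables) for step in steps]
```

Calling `resolve(STEPS, "staging")` and `resolve(STEPS, "production")` yields the same steps with different values, which is why a change to an environment only has to be made in one place.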

Data-Driven Testing

The team leverages data-driven testing for tests that verify outputs, executing the same test case multiple times with different inputs and expected values.

See documentation on data-driven testing in MagicPod here.
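For readers who think in code, the pattern can be sketched in plain Python. This is not how MagicPod implements the feature; `format_fee`, the data rows, and `run_data_driven` are hypothetical names made up for illustration.

```python
# Hypothetical function under test: formats an amount in yen for display.
def format_fee(amount_yen):
    return f"{amount_yen:,} yen"


# Each row is one run of the same test: (input, expected output).
CASES = [
    (0, "0 yen"),
    (980, "980 yen"),
    (12500, "12,500 yen"),
]


def run_data_driven(func, cases):
    """Run func once per row and collect (input, expected, actual, passed)."""
    results = []
    for value, expected in cases:
        actual = func(value)
        results.append((value, expected, actual, actual == expected))
    return results
```

Adding coverage then means adding a row to `CASES` rather than writing another test case, which is the maintenance saving this approach aims for.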

Goal: Avoid Knowledge Silos & Ensure Knowledge Sharing

To avoid dependency on a few individuals to keep the test automation process going, the team created guidelines that document practices and rules for the whole team. The guidelines cover topics such as:

- When to create shared steps and use variables

- How to integrate tests into their process

- How to use comments

- Naming conventions for test cases, shared steps, and locators

- Standard procedure for selecting locators

Goal: Ensure Test Execution Stability

Test Independence

To prevent failures caused by dependencies between tests, each test case is designed to run independently. After each test execution, cleanup processes (such as deleting test-generated data) ensure a fresh test state.
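A minimal Python sketch of this idea, assuming a hypothetical in-memory `RECORDS` list as a stand-in for application data: each test creates what it needs and removes it afterwards, so the tests pass in any order. The helper and test names are illustrative only.

```python
from contextlib import contextmanager

# Hypothetical in-memory stand-in for data the application would store.
RECORDS = []


@contextmanager
def isolated_record(name):
    """Create the data one test needs and always delete it afterwards."""
    record = {"name": name}
    RECORDS.append(record)
    try:
        yield record
    finally:
        # Runs whether the test body passes or raises, so nothing leaks forward.
        RECORDS.remove(record)


def test_record_is_listed():
    with isolated_record("visit-plan") as record:
        assert record in RECORDS


def test_store_holds_only_own_record():
    with isolated_record("invoice"):
        # Holds only because earlier tests cleaned up after themselves.
        assert len(RECORDS) == 1
```

The second test would fail if the first one left its record behind, which is exactly the kind of hidden dependency that cleanup is meant to prevent.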

Retry Mechanism

Automated tests often fail due to timing issues or external factors rather than actual bugs. Instead of manually re-running failed tests, the team uses MagicPod’s built-in retry feature for automatic re-execution. They also implement initialization procedures (e.g. deleting test-generated data) to ensure tests can restart properly in case of failures.

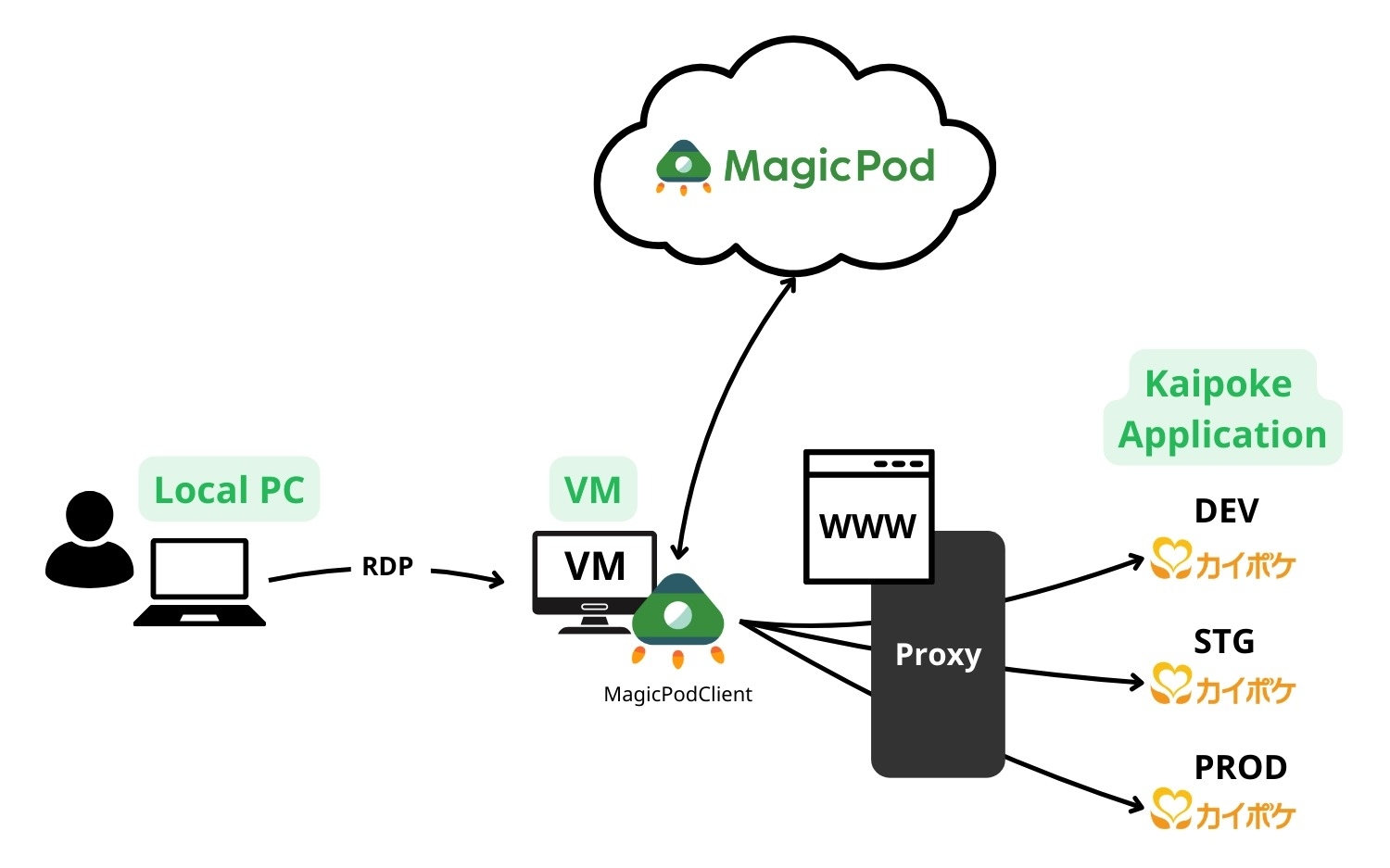
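MagicPod's retry is a built-in feature, so no code is needed to use it. The Python sketch below only illustrates why the initialization step matters when a test is re-run; `run_with_retry`, `reset`, and `flaky_test` are hypothetical names, and the failure is simulated.

```python
def run_with_retry(test, reset, max_attempts=3):
    """Run test up to max_attempts times, resetting state before each attempt.

    Returns the number of the attempt that passed; re-raises the last failure.
    """
    for attempt in range(1, max_attempts + 1):
        reset()  # initialization: remove data left behind by a failed attempt
        try:
            test()
            return attempt
        except AssertionError:
            if attempt == max_attempts:
                raise


# Hypothetical state used to simulate a test that fails once, then passes.
leftovers = []
calls = {"count": 0}


def reset():
    leftovers.clear()


def flaky_test():
    calls["count"] += 1
    leftovers.append("temp-data")
    # Simulated timing failure on the first attempt only.
    assert calls["count"] > 1, "element not found in time"
```

Without the `reset()` call, the second attempt would start with the first attempt's leftover data, and a retry could fail for reasons unrelated to the application.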

Migrating Test Execution to a Virtual Environment

The team initially ran tests on local PCs, but this approach had several challenges. The test would take control of the browser, interrupting other tasks, and a stable network connection was essential—especially in a remote work environment—since even brief disruptions could cause tests to fail. To resolve these issues, they migrated the test execution environment to a virtual setup, ensuring more stable and reliable operation.

Test Execution Timing

The team currently executes automated tests whenever system modifications or middleware version upgrades are made. Over time, the frequency of automated test executions has increased.

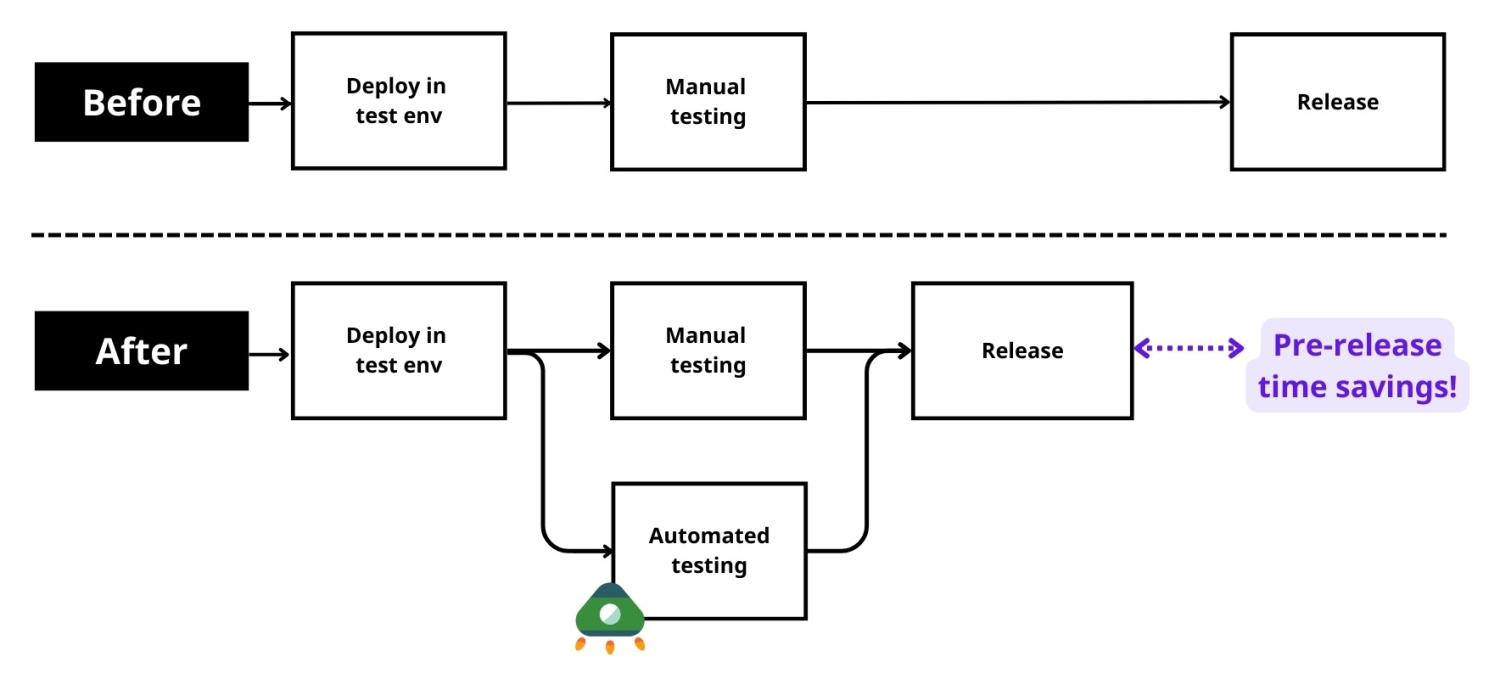

Before automation, manual tests took up substantial amounts of time or were even skipped due to time constraints. With automation, the team can run tests quickly and consistently without human intervention, leading to improved confidence in quality assurance and faster releases.

Testing process

Future Improvements

Although the Kaipoke QA team has made tremendous progress with implementing test automation, they have identified several challenges that remain to be resolved.

- So far, test automation has mainly been adopted by one team. Due to resource constraints and skill gaps, widespread adoption across teams is still limited. Since regression testing is crucial for all teams, the team hopes to gradually expand test automation coverage.

- MagicPod provides a scheduled execution feature, but it is only available for cloud execution. Since the Kaipoke team mainly executes tests on local PCs, they would like to develop their own scheduling mechanism.

- As the number and length of test cases increase, so does the test execution time. The team plans to shorten it by reviewing test cases and introducing parallel execution.
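As a rough illustration of the parallel execution mentioned in the last item, here is a minimal Python sketch using the standard library. It is not MagicPod's mechanism; the tests are hypothetical placeholders that only sleep, and the approach assumes the tests are independent of one another.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def make_test(name, seconds):
    """Build a hypothetical independent test that sleeps, then reports a pass."""
    def test():
        time.sleep(seconds)
        return name, True
    return test


def run_parallel(tests, workers=4):
    """Run independent tests concurrently and return results in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda t: t(), tests))
```

With enough workers, total run time approaches that of the slowest test rather than the sum of all tests, but only if no test depends on data created by another.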

Conclusion

By strategically optimizing their MagicPod workflow, the Kaipoke QA team has improved test stability, reduced maintenance costs, and streamlined their automation processes. Their approach—leveraging shared steps, variables, and test independence—offers valuable insights for other teams looking to enhance their own test automation.

Thank you, Mr. Nakamura, for sharing this peek into the Kaipoke QA team's workflow!